Tools – Indicators – Metrics: Data Quality in Computational Social Science

In the course of datafication, social scientists are confronted with the increasing availability of digital behavioral data (DBD). Social media platforms, mobile apps, and sensors offer rich insights into human behavior, but these new data sources come with significant challenges in terms of quality. To address these issues, the two-day workshop “Tools – Indicators – Metrics: Data Quality in Computational Social Science” (December 11 -12, 2024) brought together experts in the field to discuss ways to measure, assess, and improve the quality of digital behavioral data. The workshop was organized as part of the Competence Center Data Quality in the Social Sciences (KODAQS). It combined formats for expert inputs via keynote speeches, presentations of different case studies on existing tools and workflows, and interactive breakout sessions.

Im Zuge der „Datafizierung“ sind Sozialwissenschaftler*innen mit der zunehmenden Verfügbarkeit digitaler Verhaltensdaten (DVD) konfrontiert. Social-Media-Plattformen, mobile Apps und Sensoren bieten reichhaltige Einblicke in das menschliche Verhalten, aber diese neuen Datenquellen sind mit erheblichen Herausforderungen in Bezug auf die Qualität verbunden. Um diese Probleme anzugehen, brachte der zweitägige Workshop „Tools – Indicators – Metrics: Data Quality in Computational Social Science“ (11.-12. Dezember 2024) Expert*innen auf diesem Gebiet zusammen, um Möglichkeiten zur Messung, Bewertung und Verbesserung der Qualität digitaler Verhaltensdaten zu diskutieren. Der Workshop wurde im Rahmen des Kompetenzzentrums Datenqualität in den Sozialwissenschaften (KODAQS) organisiert.

DOI: 10.34879/gesisblog.2025.95

Data quality conceptions and KODAQS introduction

The organizing team (Yannik Peters, Katrin Weller and Jessica Daikeler) kicked off the workshop by presenting a new conceptualization of data quality and introducing the KODAQS project. In particular, the conceptualization of data quality into intrinsic and extrinsic dimensions was emphasized. While the intrinsic dimension refers more to the data accuracy, the extrinsic dimension focuses on its usability. A second distinction involved potential errors sources for the representation and measurement side of data quality. Measurement errors occur when a measurement is imprecise or invalid. Representation errors, on the other hand, arise when the sample is not representative of the population of interests. After clarifying the key terms of data quality, the KODAQS project was introduced. KODAQS is the Competence Center for Data Quality in the Social Sciences funded by the German Federal Ministry of Education and Research and the EU Next Generation fund. Its main goals are 1) to provide tools, services, and learning resources to assess and improve the quality of social science data and 2) to conduct cutting-edge research on this matter. The soft-launched version of the KODAQS Data Quality Toolbox, an educational resource for data quality assessment, was introduced, with a special focus on the first three DBD tools Delab (from Julian Dehne), TextPrep (from Yannik Peters and Kunjan Shah), and Valitext (from Lukas Birkenmaier).

Keynote speeches

With Valerie Hase (Communication Science, LMU Munich) and Christo Wilson (Computer Science, Northeastern University), the workshop featured two prominent keynote speakers who shed light on the quality of digital behavioral data from different perspectives. First, Valerie Hase proposed in her keynote “From awareness to action: Defining, Assessing, & Improving the Quality of Digital Trace Data” a process perspective for improving data quality highlighting eight key rules: 1) Plan ahead, 2) Combine methods for data collection, 3) Turn “found” to “designed” data where possible, 4) Statistically correct for errors, 5) Ask different questions, 6) Document everything, including errors, 7) Engage in community-based initiatives and 8) Push for infrastructural change. Christo Wilson, on the other hand, took a more use-case-centered approach in his keynote “Assessing Data Quality in Practice: A First Look at Trace Data from the National Internet Observatory”. The National Internet Observatory is a platform for large-scale and mobile-based data collection regarding human and platform behavior. It combines web tracking and scraping, surveys, and mobile app data collection based on participants’ informed consent. Christo Wilson provided detailed insights into the data collection process and identified four main challenges to guarantee data quality for their studies: 1) Attrition (participant retention), 2) Scammers (e.g. IP addresses outside the US or from well-known VPNs etc.), 3) demographic representativeness (e.g. certain ethnic groups, age groups, and education levels are over- or underrepresented), 4) Behavioral representativeness (NIO participants should be representative of user behavior on the internet, which is very difficult to measure). The keynote highlighted that ensuring data quality in large-scale, avant-garde data projects is a permanent, ongoing challenge, especially because not every data quality related problem can be anticipated in advance.

Khoury College of Computer Sciences

Northeastern University

Department of Media and Communication

LMU Munich

Collaborative break-out sessions

Besides presentations, the participants of our workshop engaged in two collaborative breakout sessions:

1) Measuring data quality: Do we need standard indicators?

2) Potential tools and workflows for data quality assessment

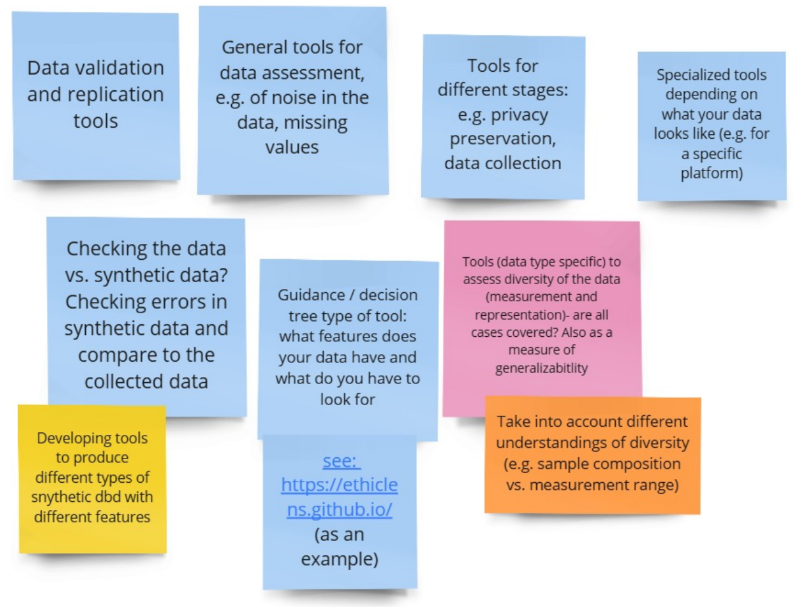

The first one explored the need for standardized indicators to measure data quality, especially for various data types such as social media content, sensor data, and web tracking. Against the backdrop of different use case scenarios, participants discussed and developed potential indicators of data quality. In the second breakout session, participants had a group discussion on how to implement indicators as tools. They also discussed missing data quality tools that they wish to have available for their research, e.g. a tool that is sampling information about a platform’s user base, one that helps to compare data sets of the same topic, an anonymization tool or deepfake detectors.

Selected case studies on existing tools or workflows

Additionally, the workshop featured three selected case studies: 1) Christopher Klamm, Ruben Bach, and Tornike Tsereteli (University of Mannheim) introduced the CARING project, demonstrating the importance of community-driven approaches in improving data quality. The initiative focuses on creating an open platform where users can collaborate to annotate, validate, and improve datasets. This approach highlights how community engagement can lead to more reliable data for researchers and data scientists alike. 2) Jan Schwalbach (GESIS) and Lukas Hetzer (University of Cologne) presented the ParlLawSpeech dataset, which consists of 33,738 bills, 22,449 laws, and nearly 3 million parliamentary speeches from seven European parliaments. They emphasized the need for use-case specific validation procedures, described how they conducted multiple validation rounds, and highlighted the amount of work and dedication that goes into producing such a high-quality data set. 3) Finally, Aditi Dutta (The Alan Turing Institute) and Rabiraj Bandyopadhyay (GESIS) presented a case study focused on detecting ambiguity in subjective tasks, such as sexism detection in online platforms. Their approach used a model-centric quality analysis technique called Pointwise V-Information (PVI), which helps identify ambiguous annotated data. By combining PVI with pre-trained models like BERT and RoBERTa, the team improved model performance, particularly in subjective tasks where labeling inconsistencies often occur.

Conclusion

With over 50 registered participants from more than 10 countries and across scientific disciplines the workshop demonstrated that the issue of the quality of digital behavioral data is highly topical, international and interdisciplinary. The KODAQS workshop highlighted that improving data quality for digital behavioral data does not mean finding one comprehensive solution, but rather requires considering the use case specifics, affordances, and nuances that come along with different scenarios. Key strategies for improving quality of digital behavioral data include the use of tools and indicators, proactive planning, combining multiple data collection methods, and being sensitive to demands that occur along the data collection process.

Really appreciated this post—super timely for anyone working with digital behavioral data. The distinctions between intrinsic vs. extrinsic quality, and representation vs. measurement errors, stood out to me. Thanks for sharing the emerging tools (like ValiText), workflows, and case studies. They’re exactly what we need to raise our standards in computational social science.